Deep Findings

In last month’s pilot, we stepped into the ring with something between a manifesto and a declaration. Weeks packed with AI news — mixed with two or three pages of open questions — pushed us to put it all into words in this newsletter that’s just starting to find its footing.

The weeks that followed weren’t calmer. Quite the opposite. And here in Argentina, the Atlantic Ocean suddenly became part of the daily routine — thanks to a surprising stream that revealed, in full HD and real time, the biodiversity living in the depths of a sea we barely know, despite it being one of our most popular destinations. Mind-blowing, right? First finding: sometimes the everyday is stranger than we think.

You’re probably wondering what any of this has to do with artificial intelligence and our life as a creative studio. Fair point. The answer is: more than you’d expect. Let’s start from the beginning.

A group of Argentine scientists, members of GEMPA (Grupo de Estudios del Mar Profundo de Argentina) and CONICET (Argentina’s National Scientific and Technical Research Council) along with other marine biologists and researchers, earned the chance to lead an exploration campaign across the Argentine South Atlantic Sea aboard the R/V Falkor (too), a state-of-the-art research vessel operated by the Schmidt Ocean Institute, a US-based nonprofit dedicated to scientific research. Over two weeks, the team conducted dives reaching between 900 and nearly 4,000 meters deep. These were made possible by ROV SuBastian, a remotely operated vehicle equipped with advanced sampling technology (with minimal environmental impact) and able to capture ultra-high-definition footage in real time, transmitted through the Starlink network.

02/ Starfish

That’s the news. What comes next is the expression of pure awe at an unknown world they brought to our screens — images that revealed a strange and hypnotic beauty we never would have seen without those instruments. Day after day, gathered around the living room screen in our office, someone would shout “it’s starting!” and we’d all drop everything. The conversations that followed became an accelerated postgrad in marine biology — and now we’re all honorary graduates.

But what made this scientific campaign a national viral trend? Without trying to explain it fully, and only from our own reflective vantage point, we think two main factors were at play: surprise and communication. Because the stream was free and fully accessible, people got to watch a place as unknown as the ocean floor unfold in real time. Beyond the curiosity, it became a nearly 24-hour companion. And in a hyperconnected but lonely present, not feeling alone becomes genuinely appealing. That connects directly to the streaming phenomenon: could it be that, surrounded by so much artificial stimulus, we find comfort in hearing humans talk about everyday things? We like having something to listen to while we do other things. And in that sense, the scientists’ voices, especially the one they nicknamed Coralina, were completely hypnotic and passionate. It didn’t matter whether they were talking about brittle stars, anemones, or Chinese GDP.

(Note to the reader: if you’re paying attention, we still haven’t mentioned AI. And yet, the wonder is total. Could it be that — as humans — beyond the scientific discovery, what we actually did was create content for it?)

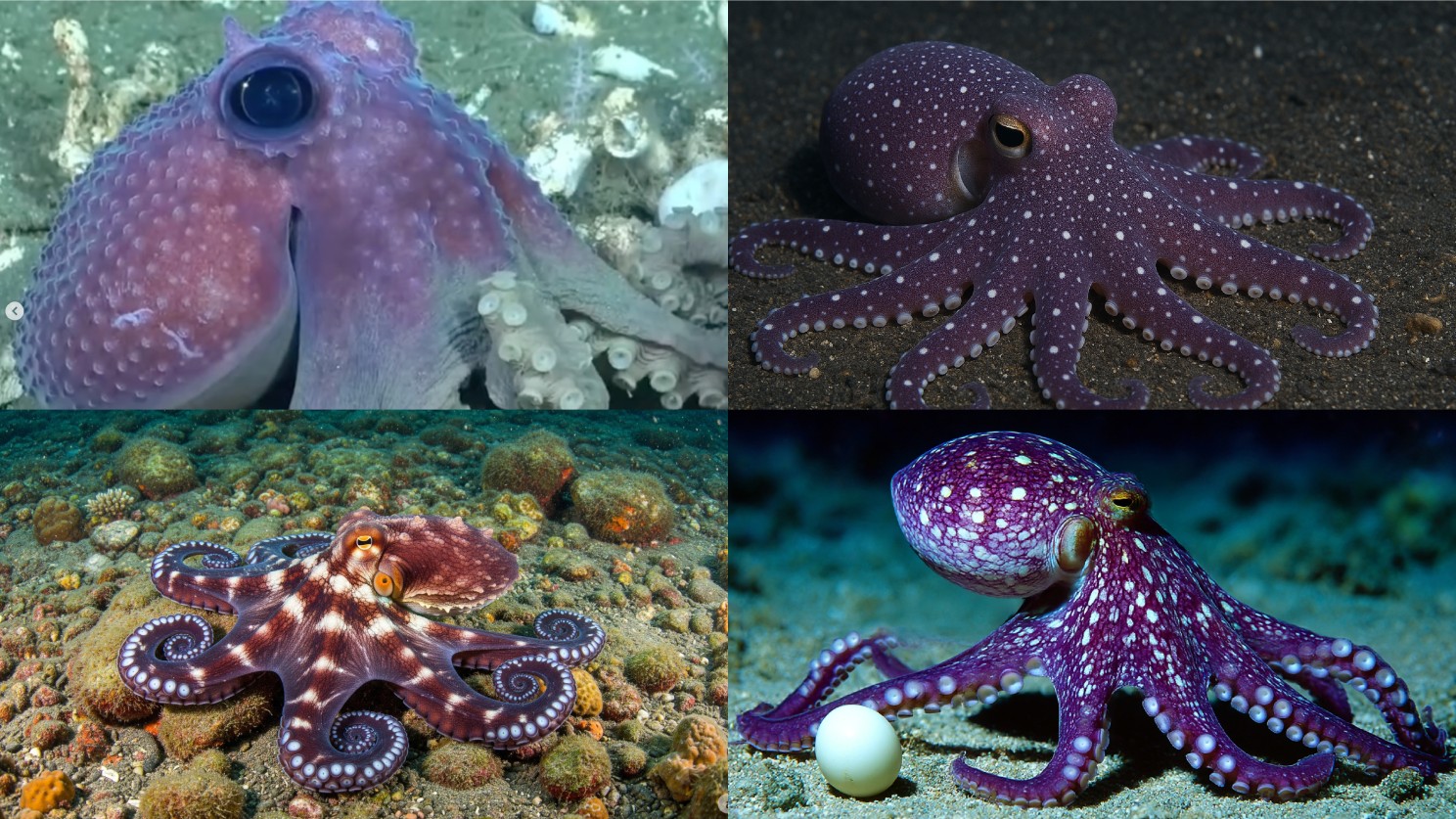

03/ Octopus

Knowing this, and if we’re allowed to connect ideas: if the Argentine deep-sea campaign hadn’t been filmed live, and therefore no record had existed, could any AI generate that knowledge or imagery without the information humans collected through years of science and technology? Second finding: we suspect AI feeds on our experiences, experiments, successes, failures, and ideas accumulated across the whole arc of human development. And far from discouraging us, that confirms our hypothesis about the value of human critical thinking — and its infinite possibilities.

Because, let’s be clear: AI didn’t travel to the bottom of the sea. We did. If it now generates content based on what was discovered — as it already has — we’re sorry to disappoint: that’s not its achievement. It’s ours. And with that, we’re not dismissing AI’s potential; we’re pointing to a reality that lets us think — among other things — about the origin of creativity. (If you want to explore this further, we promise to tackle it in future issues.)

04/ Squid

But let’s get back to the heart of this issue: specifically, the question about AI’s capacity to create entirely new styles or visual universes — sparked by our curiosity around the Schmidt Ocean Institute stream. Can AI produce something without first having a human-made reference? Could it have created the look, texture and atmosphere of the South Atlantic Ocean floor without a scientist-controlled robot first showing it what’s there?

This question has been bouncing around in our heads since last issue, when we wrote:

“That’s why we created Ring-Sight: an intuitive, thoughtful logbook about the coexistence between us (the humans at Nocaut) and artificial intelligence. A kind of refuge in the competitive grind of creative work. The kind of work AI thinks it can do so easily and quickly, not realizing that its ‘brain’ was built on our experiences, our ways of inhabiting and understanding the world.”

We want to share the visual work we did back then using ChatGPT and Runway. We asked ChatGPT to propose images to illustrate the text, and to give us the prompts needed to generate them. We did it because this newsletter is also a lab: a space to test tools, experiment, and learn. And it felt like the right moment to put all of that to work.

Through that process, we confirmed something: when working with generative AI, it’s not enough to say what we want to see. We also have to say how we want it to look — the graphic style, the atmosphere, the finish.

On one side there’s the idea — the scene itself — for example:

“Conceptual 3D boxing ring floating in an abstract void. Inside: human figures with artistic tools (brushes, scripts, keyboards).”

But how does AI actually produce that image? First, the model trains on enormous datasets: millions of photos, artworks, and visual resources collected and classified by humans in digital libraries and archives. From that, it learns patterns and structures: how things look, how they relate to each other, how an idea gets built visually.

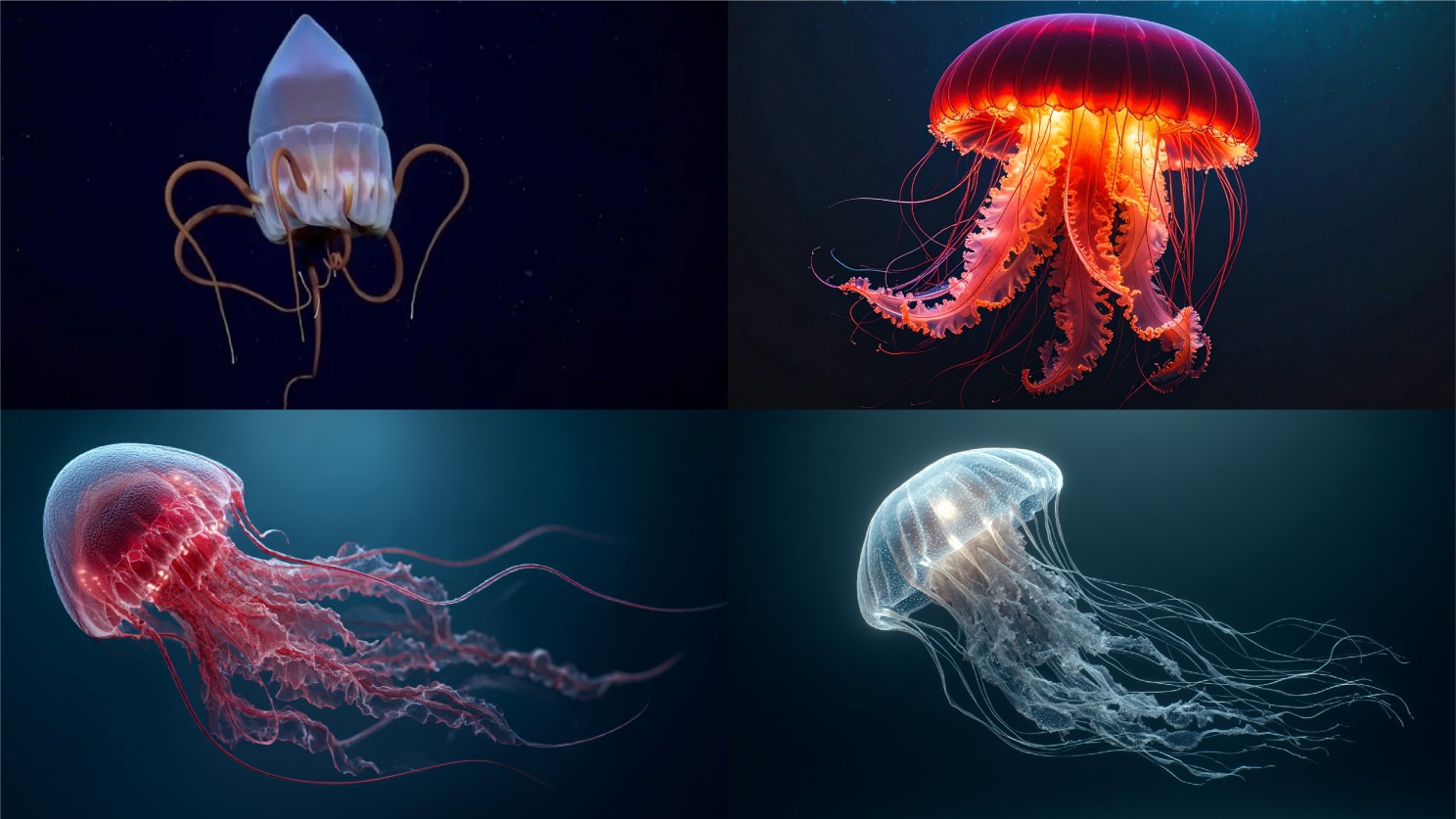

05/ Jellyfish

Then there’s the style. Without guidance, the AI defaults to something generic. But that’s exactly where it pays to direct it. We can go from something open — “The scene should feel symbolic, poetic and ethereal, not literal” — to very precise descriptions:

“Bold digital illustration style with saturated, vibrant colors, clean vector lines and smooth gradients. An aesthetic that combines psychedelic elements with modern pop art: hot pinks, electric blues and neon oranges against dark backgrounds. Flat design with subtle shadows and layered shapes for depth, sharp edges and high contrast. Pop surrealism, vector art, clean gradients, psychedelic fusion.”

The possibilities always start from what already exists: styles, techniques, artistic movements and visual aesthetics that AI knows through its training on work made by humans. It doesn’t invent from scratch; it combines, mixes, and reinterprets references. We’ve already seen it flex: Ghibli, Pop Art, Comic, Pixel Art, styles that flooded social media and WhatsApp chats. But are any of those truly its own original work? Of course not.
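For anyone who wants to try this at home, here is a minimal sketch of the two-part structure described above: the scene on one side, the style on the other. It is not the workflow we actually used with ChatGPT and Runway. The names `ImagePrompt` and `render` are hypothetical, invented just for this illustration, and the code only assembles text; it calls no image-generation tool. The strings come straight from the examples in this issue.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImagePrompt:
    """Hypothetical helper: a prompt split into what to show and how it should look."""

    scene: str
    style: str = ""

    def render(self) -> str:
        # Keep only the non-empty parts and join them with a single space,
        # so the style direction always follows the scene description.
        parts = [self.scene.strip(), self.style.strip()]
        return " ".join(part for part in parts if part)


scene = (
    "Conceptual 3D boxing ring floating in an abstract void. "
    "Inside: human figures with artistic tools (brushes, scripts, keyboards)."
)
open_style = "The scene should feel symbolic, poetic and ethereal, not literal."

scene_only = ImagePrompt(scene)            # no guidance: the tool picks the look
directed = ImagePrompt(scene, open_style)  # same idea, with a style direction

print(directed.render())
```

Keeping the two parts separate makes it easy to hold the scene fixed and swap only the style, from an open direction like the one here to a very precise description.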

Third finding: AI needs us.

Now we want to know what you think. And with that, we’ll leave you until next month — when we’ll keep exploring this topic with the same passion Coralina felt the first time she saw, live, the creatures she’d spent years studying in illustrated textbooks.

06/ Something like an octopus

Text: Paula Caffaro / Images: Belén Ramírez

01/ AI-generated with Midjourney. Prompt: “A jellyfish floating in the dark ocean. It has one large body with long tentacles and frills around its neck, with a red coloration.”

02/ a) Expedition photograph. b, c, d) AI-generated with ChatGPT, Gemini and Midjourney respectively. Prompt: “A coral pink starfish with sharp edges and rounded corners, resting on the gray sea floor, photographed from above. The star has a textured surface that resembles soft fabric, giving it an organic feel. It is slightly glowing in shades of orange and red. There are some white algae growing around its body, adding to its natural appearance. This scene conveys calmness and tranquility, as if you were swimming near or above it.”

03/ a) Expedition photograph. b, c, d) AI-generated with ChatGPT, Gemini and Midjourney respectively. Prompt: “A purple octopus with white spots is laying on the ocean floor, holding an egg. Scientific photography, showing high-resolution images with great detail.”

04/ a) Expedition photograph. b, c, d) AI-generated with Firefly, Midjourney and ChatGPT respectively. Prompt: “A small squid swims in the dark ocean, with an aerial view of its body and tail. Scientific photography, showing high-resolution images with great detail.”

05/ a) Expedition photograph. b) AI-generated with Firefly. c, d) AI-generated with Midjourney. Prompts: “A jellyfish floating in the dark ocean. It has one large body with long tentacles and frills around its neck, with a red coloration” and “Realistic image of an adorable white jellyfish with long tentacles floating in the dark ocean, captured in high-definition images by underwater exploration teams.”

06/ AI-generated with ChatGPT. Prompt: “Grimpoteuthis imperator octopus swimming that inhabits the bottom of the cold waters of the Argentine Sea. Scientific photography, showing high-resolution images with great detail.”